Fail2Drive: Benchmarking Closed-Loop Driving Generalization

Fail2Drive is the first CARLA benchmark designed to test closed-loop generalization on truly unseen long-tail scenarios. By pairing each shifted route with an in-distribution counterpart, it exposes substantial hidden failures in current state-of-the-art driving models.

Explore the Fail2Drive benchmark, run evaluations, and build new scenarios with the toolbox.

Motivation

As autonomous driving moves closer to real-world deployment, the focus is shifting toward rare, long-tail situations that are not represented in the training distribution. This is exactly where generalization matters most. Simulators like CARLA are ideal for evaluating these cases in a safe and cost-effective setting. However, current CARLA benchmarks often fail to leverage this potential, as they evaluate on the same scenarios used during training. Fail2Drive addresses this limitation by introducing truly unseen scenarios and establishing a concrete, reproducible basis for evaluating generalization, exposing widespread overfitting in recent CARLA models and making generalization quantifiable.

Threefold contribution

Benchmark

A public benchmark with 200 routes provides a fair, model-independent basis for comparison and enables systematic work on generalization.

Analysis

An evaluation of seven models reveals widespread shortcut learning, brittle visual cues, and missing fallback behavior, suggesting avenues for future work.

Toolbox

Extensible scenarios, assets, and an expert policy enable community-driven customization.

Benchmark

Controlled evaluation

All generalization scenarios are paired with an in-distribution baseline to isolate generalization performance.

Unseen Scenarios

Existing CARLA benchmarks use the same scenarios for training and testing. Fail2Drive adds unseen scenarios to measure how well current models actually generalize.

Analysis

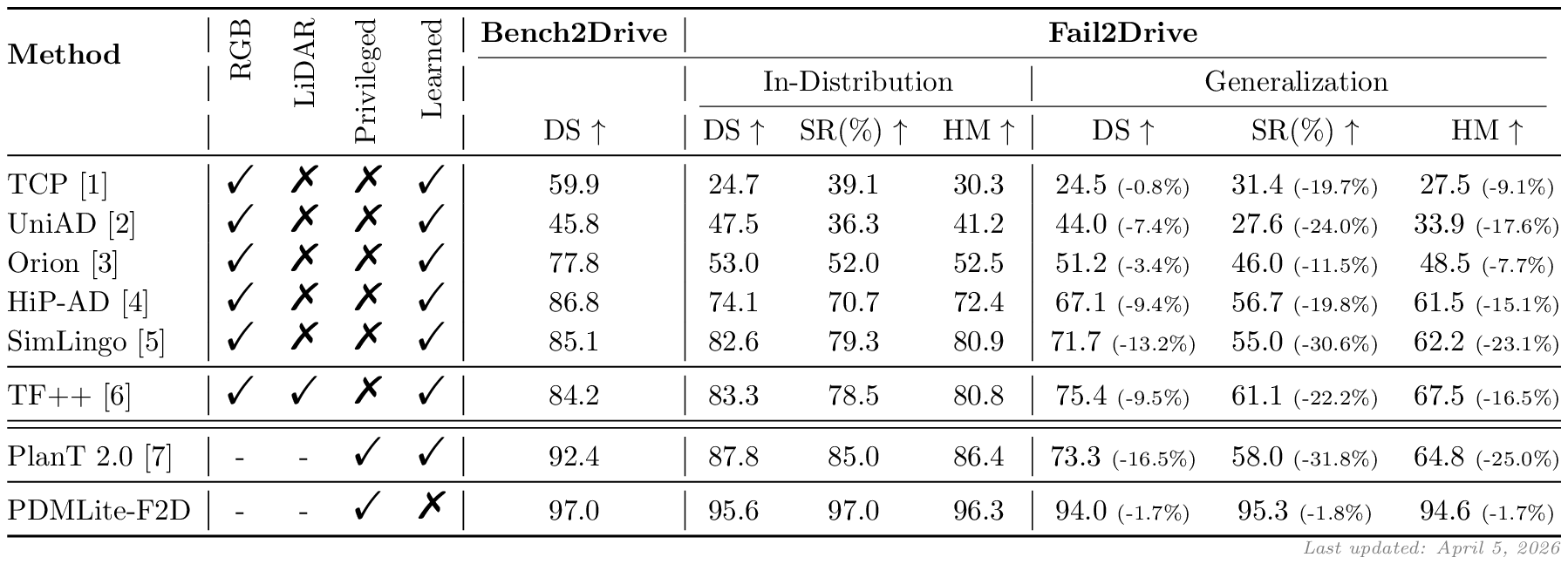

We analyze seven recent driving models, revealing significant overfitting and weak generalization to unseen long-tail scenarios.

Qualitative Failures

Quantitative Evaluation

Fail2Drive makes generalization failures measurable. The quantitative metrics capture the gap between in-distribution and unseen scenarios and show that breakdowns are widespread across recent state-of-the-art models.
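The paired design suggests a simple way to summarize such a gap: compare each shifted route's score with the score on its in-distribution counterpart. The sketch below is an illustration only. The `PairedResult` record, the `generalization_gap` helper, and the example scores are hypothetical and do not reflect the actual Fail2Drive output format or its official metrics.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class PairedResult:
    """Scores for one route pair (hypothetical record, not the Fail2Drive format)."""
    route_id: str
    in_distribution_score: float  # score on the in-distribution baseline route
    shifted_score: float          # score on the paired unseen-scenario route


def generalization_gap(results):
    """Return (mean baseline score, mean shifted score, mean per-pair drop)."""
    if not results:
        raise ValueError("need at least one paired result")
    base = mean(r.in_distribution_score for r in results)
    shifted = mean(r.shifted_score for r in results)
    # Averaging per-pair differences keeps each shifted route tied to its own baseline.
    drop = mean(r.in_distribution_score - r.shifted_score for r in results)
    return base, shifted, drop


# Made-up scores, purely for illustration.
example = [
    PairedResult("pair_a", 90.0, 60.0),
    PairedResult("pair_b", 80.0, 70.0),
]
base, shifted, drop = generalization_gap(example)
print(f"in-distribution={base:.1f} shifted={shifted:.1f} mean drop={drop:.1f}")
```

A positive mean drop indicates that the model performs worse on the shifted routes than on their baselines; because the routes are paired, the drop can be attributed to the scenario shift rather than to route difficulty.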

Leaderboard

Fail2Drive maintains an official leaderboard of driving methods to ensure fair and consistent comparison across approaches.

We encourage users to share their results in the leaderboard repository:

Submit results via GitHub →

Toolbox

The Fail2Drive toolbox provides tools for researchers and practitioners to create and share custom long-tail scenarios. The toolbox is designed to make scenario authoring lightweight, reproducible, and easy to integrate into evaluation workflows.

Design your own scenario

Drag an object into the scene, place it anywhere, and rotate it. Drag objects outside the scene to delete them.

Download Fail2Drive and drive through your custom scenario in the simulator.
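Conceptually, a scenario built this way is a list of objects, each with a type, a position, and a rotation. The sketch below models that idea with a small data structure. The `PlacedObject` class, its field names, the object identifiers, and the JSON layout are assumptions made for illustration; they are not the toolbox's actual scenario format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class PlacedObject:
    """One object in a custom scene (hypothetical structure)."""
    kind: str             # e.g. "construction_cone"; identifier is an assumption
    x: float              # position in metres in the map frame (assumed)
    y: float
    yaw_deg: float = 0.0  # rotation about the vertical axis, in degrees

    def rotate(self, delta_deg: float) -> None:
        """Rotate the object, keeping the yaw within [0, 360)."""
        self.yaw_deg = (self.yaw_deg + delta_deg) % 360.0


# Place two objects, rotate one, then delete the other.
scene = [
    PlacedObject("construction_cone", 12.5, -3.0),
    PlacedObject("street_barrier", 14.0, -3.0, yaw_deg=90.0),
]
scene[1].rotate(300.0)  # 90 + 300 wraps around to 30 degrees
scene = [obj for obj in scene if obj.kind != "construction_cone"]

# Serialize the remaining objects so the scene can be stored or shared.
print(json.dumps([asdict(obj) for obj in scene], indent=2))
```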

Objects

Construction cone

Street barrier

Food cart

Trash bin

Plastic chair

Community extension

Fail2Drive is designed to grow with the community. The toolbox lets researchers create custom routes and scenarios tailored to their specific needs and expand the existing benchmark. Together, the benchmark and toolbox provide a unified and rigorous testing ground for evaluating generalization in CARLA. We welcome code contributions and offer a scenario hub for sharing and discovering interesting scenarios.

Citation

@misc{gerstenecker2026fail2drivebenchmarkingclosedloopdriving,
  title={Fail2Drive: Benchmarking Closed-Loop Driving Generalization},
  author={Simon Gerstenecker and Andreas Geiger and Katrin Renz},
  year={2026},
  eprint={2604.08535},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2604.08535},
}